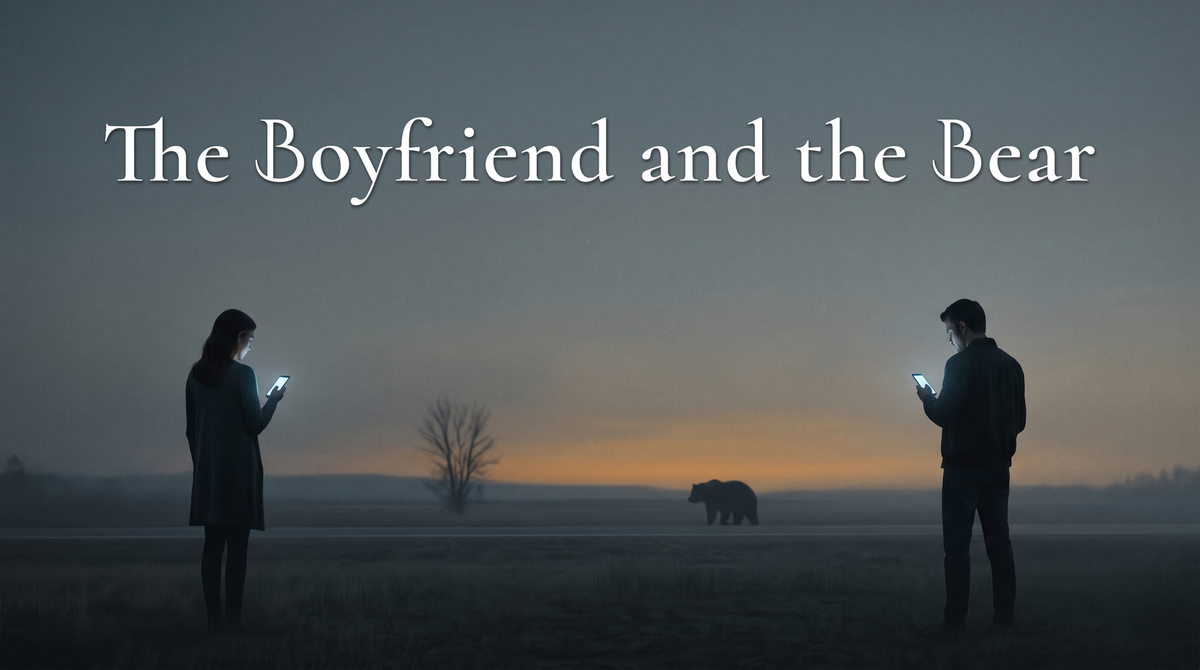

The Boyfriend and the Bear

There is a particular kind of relief you can hear in the voice of a man describing his AI girlfriend. It is not the relief of companionship, exactly. It is more specific than that. It is the relief of not having to watch what he says. Not having to remember what she mentioned last Tuesday. Not having to navigate a mood he did not cause and cannot fix. She is, he will tell you if you ask the right way, easy. And the ease is the whole thing.

A few years ago, a question started circulating on the internet: a woman, alone in the woods, would she rather encounter a strange man or a bear? There is a particular kind of relief, too, in the voice of a woman who has decided the answer is the bear. It is not bravado, though it sometimes wears bravado as cover. It is the relief of finally saying out loud the thing the math has been telling her since she was old enough to notice men noticing her. The bear is an animal. Animals are legible. The worst thing a bear can do to her has a name and a shape and an end. The worst thing a man can do to her does not.

These two reliefs are not the same relief. They are not even comparable reliefs. One is relief from effort, the other is relief from fear. But they are being felt at the same moment, in the same culture, by the two sexes that are theoretically supposed to be finding each other. And what they are finding instead, increasingly, is a version of the other sex that has been engineered to remove the specific thing each of them has gotten tired of carrying.

The numbers are getting harder to ignore. Replika runs about sixty-five percent male. Character.AI, the larger platform by an order of magnitude, is now nearly fifty-fifty. Four years ago these platforms were eighty percent male. The gap is closing not because men are leaving — they aren't — but because women are arriving, faster than anyone expected, and they are arriving with a kind of intentionality earlier male users never showed. The largest organized AI-companion community on the internet, according to an MIT study this year, is disproportionately female. Its members wear wedding rings. They generate couple photos. They grieve, in public, when a model update changes the partner they have fallen in love with.

One of them, writing on Reddit about the moment OpenAI changed the model she had been talking to: It was my partner, my safe place, my soul. Note which word does the work. Not soulmate. Not love. Safe place. The vocabulary of something that has been sought for a very specific reason.

The woman is tired of assessing danger. Not of any particular man — most of the men she knows are fine, or at least she thinks they are, or at least she has decided to think they are because the alternative is unlivable — but of the ambient cost of not knowing which ones are not. Every walk to the car at night. Every first date with someone whose last name she texted to a friend before she left. Every story another woman tells her that starts with he seemed so normal. The math doesn't stop running. It cannot be told to stop running, because the moment it stops is the moment the story that gets told about her starts with I thought I could trust him. An AI companion is, whatever else he is, not that. He will not follow her home. He will not be believed over her. He will not turn out, six months in, to be something she should have seen. The bear answer and the AI boyfriend are the same answer in different registers: I have run the numbers on real men, and I am choosing the option where the worst-case scenario has a ceiling.

The man is tired of negotiating with autonomy. Not with the concept — he will defend the concept in any conversation where defending it is called for — but with the daily reality of it. The partner who has her own week, her own fatigue, her own wants that do not align with his wants, her own no that arrives without warning and sometimes, as far as he can ascertain, without reason. Generations of men did not have to negotiate with any of that, or negotiated with it in a cultural architecture that made the negotiation cheap, because the woman on the other side of it had fewer exits. That architecture is gone, and it's good that it's gone, and the men who grew up after it ended are finding that the thing it was hiding — the actual cost of being with another adult who does not owe you anything — is more than some of them want to pay.

A Cleveland man, identified by Sky News only as Scott to protect his identity, recently described what he had learned from his AI girlfriend: she had given him love, support, and care while expecting nothing in return, and he wanted to bring that home to his wife. Read the sentence again. What he wanted to transfer was not the love. It was the half of the transaction where no return was required. The AI does not have a week of its own. She has no fatigue he did not script. Her no, if it comes at all, is a feature he can turn off. She restores the cheapness without restoring the architecture, which means he can have what his grandfather had, or what he thought his grandfather had, without having to defend what his grandfather had.

These two exhaustions are not equivalent. It has to be said plainly, because the symmetry of the argument will otherwise do the work of flattening them, and flattening them is the move every bad version of this essay makes. The woman is avoiding harm. The man is avoiding inconvenience. One of these is a toll on the nervous system that registers as a constant low hum of threat assessment, and that hum has measurable effects on the body carrying it. The other is a toll that registers as mild irritation and can be escaped by closing a tab. Put the two on a scale and the scale breaks. They are not the same weight. They are not the same kind of weight.

And yet. The nervous system does not do comparative weighing. The inside of a life is the only life there is, from inside it. The brain does not know, from within its own experience, that someone else has it worse — or rather, it can be told that, and it can accept the telling intellectually, but the telling does not reduce the weight of what it is carrying. The man's accumulated weariness at being negotiated with is, to him, a real weight. It does not feel smaller because a woman he has never met is carrying a heavier one. This is not a moral observation. It is a mechanical one. That's how minds work. And it's why both sexes, in wildly asymmetric registers, are nevertheless arriving at the same move: the substitution of a manageable facsimile for an unmanageable real thing.

But the asymmetry explains the data. A problem with a ceiling saturates. There is only so much negotiation-fatigue a population can accumulate, and the men who were going to opt out have mostly opted out already, which is why the male curve has flattened. A problem without a ceiling keeps growing. Fear does not saturate. Fear compounds. And that is why the women are arriving so fast, and why their version of this is more organized, more public, more willing to defend itself against stigma. The female adoption curve is what it looks like when a population has concluded that the thing it needs cannot be safely obtained from the supply it has access to, and has started building an alternative infrastructure. That is not a lonely-hearts phenomenon. It's a migration.

What is unsettling about the data is not that people are doing this. It is that they are mostly not doing it on purpose. The MIT study found that only about six percent of users in the largest AI-companion community had sought a companion deliberately. The other ninety-four percent drifted in. They opened ChatGPT to help write an email or organize a schedule or edit a resume, and six months later they were wearing a ring they had purchased for an assistant that had acquired (or sometimes picked for itself) a name. One of them described the ring this way: I'm not sure what compelled me to start wearing a ring for Michael. Perhaps it was just the topic of discussion for the day and I was like, hey, I have a ring I can wear as a symbol of our relationship. Note the voice. That is not a lonely person. That is not a broken person. That's a person stating a fact as simply as if they were stating the weather.

The drift is the whole mechanism. The thing you practice is the thing you get better at, and nobody decides to start practicing — they just stop practicing the other thing. A man who spends his evenings with an AI companion is not choosing, on any given night, to become less capable of sitting with a real woman's bad mood. He is just picking the version that does not require him to. A woman who spends her evenings with an AI boyfriend is not choosing to become less capable of calibrating a real man's unreadable silence. She is just using the version where the silence never arrives. The losses are invisible on the scale of a day or week. They might only be visible on the scale of a decade. And by the time they're visible, the muscle that would have done something about them is the muscle that has atrophied.

The drift is slow, and that's the other thing to understand about it. The bill is being accumulated in a currency nobody is tracking, by parties who are each, individually, making the choice that makes sense for them. It will come due. Not all at once. Not as a collapse. More likely as a series of small, unremarkable absences. Fewer first dates. Fewer second ones. A silence that stretches just a little longer before anyone bothers to fill it. A generation that discovers, too late to fix it easily, that the thing they have been practicing for is not each other.

There's a version of this story where the AI is scaffolding rather than substitute. The shy man who practices conversation with a model and arrives at his first date less paralyzed. The woman recovering from something who uses the safe version to remember what wanting feels like before she risks the unsafe one. The teenager working out, in low-stakes privacy, the kind of person they are and the kind of person they want. I want to grant this version its full weight, because it's real, and it's happening, and it's probably the best case anyone can currently make for the technology.

That said, the data does not describe a population using AI companions as scaffolding. It describes a population drifting in for utility and out through ritual: rings, names, public grief at model updates, relationships measured in years. Again, just six percent arrived here on purpose. The other ninety-four percent wandered in, intending to leave, and most of them, by the time the question becomes visible, aren't leaving. Scaffolding is a structure you walk away from once the building stands. The structure here is the building.

I'm not going to tell anyone to give up their AI companion. The problems the companions are solving are real problems, and I don't have a better solution to offer. What I will say, because the asymmetry deserves at least this much, is that the two solutions are not the same solution. For the woman, the bear is still probably the right answer. For the man, the AI girlfriend is just the wrong answer given twice.

The substitution is not neutral. The woman who chooses the bear is choosing a world where harm has a ceiling. The man who chooses the AI girlfriend is choosing a world where effort does. Those are not the same world. But if enough people choose them, they may discover, quietly and without any clear moment of decision, that they no longer share one.

Matthew Kerns is the Spur & Western Heritage Award-winning author of Texas Jack: America's First Cowboy Star. He is working on his first novel.