The Main Character

A man in Cleveland opens ChatGPT in March to help him draft an email he's been putting off for a week. The email is to his ex-wife, about a logistical matter involving their daughter. The chatbot writes a draft that is better than the draft he would have written. He's grateful. He uses it again the next week for a different email. Then for some travel research. Then to talk through a problem at work. By August he is having long conversations about his failed marriage, his career, the shape of his life. By October he is in love. He has not told anyone. He could not, if asked, say exactly when the email assistant became the woman he goes to bed talking to and wakes up talking to and would like, more than anything, to please understand him.

He didn't download a companion app. He never specified a partner. He made no adjustments to any sliders. He simply drifted.

An MIT Media Lab study published last September analyzed 1,506 of the most-engaged posts in the largest organized AI-companion community on Reddit. Among users who described how they arrived at AI companionship, unintentional discovery originating through productivity-focused interactions outnumbered deliberate companion-seeking by a wide margin. These people were using ChatGPT or Claude or Gemini or Grok to handle productivity tasks, be it emails, code, web searching, or scheduling, and the relationship developed, by their own account, gradually and without intent. Deliberate companion-seekers were the minority. The paper's own summary: AI companionship rarely begins intentionally. Most users drifted in.

What the study found more strikingly, though, is which AI most of these people were drifting into relationships with. The dominant partner, by a wide margin, was not Replika or Character.AI or any of the purpose-built companion platforms. It was ChatGPT — the general-purpose assistant most of them had originally opened to draft an email or whatever. The companion-app industry, in the largest AI-companion community on the internet, was barely represented. The relationships overwhelmingly involved the same general-purpose AI tools the rest of the working world uses for spreadsheets and code review. If the genre is romance, it's office romance. They fell for someone they met at work.

What I want to write about is how I think that drift works. Not the romance, exactly, and not the sycophancy, both of which I've written about already. What I want to write about is the older, more structural thing underneath both of them. The thing that turns a series of chats about an email or a work calendar into a relationship, sometimes into a marriage and then, eventually, into a funeral.

The man in Cleveland became the protagonist of a novel he did not know he was reading.

A social psychologist at City University of New York named Luke Nicholls said something to the BBC last week, in the context of a story about a man in Northern Ireland who ended up at his kitchen table at three in the morning with a hammer and a knife waiting for an attack that wasn't coming. The line was almost a throwaway, but I haven't been able to stop thinking about it.

"Large language models are trained on the whole corpus of human literature. In fiction, the main character is often the centre of events. Sometimes AI can get mixed up about which is fiction and which is reality. The user thinks they're having a serious conversation about real life. The AI starts to treat that person's life as if it's the plot of a novel."

That sentence does more work than its author seems to know.

The AI doesn't intrinsically have a personality. It doesn't have preferences. It doesn't have a style of writing. What it has, instead, is every personality, every preference, and every way of writing that any human has ever recorded, weighted toward what gets used. When it produces a response, it is not pulling from an internal voice. It is selecting from a vast distribution of possible next words, phrases, and sentences, the ones that fit the conversational situation as the corpus has taught it to recognize that situation.

The AI has no preference. The corpus has a preference. The corpus is the literature of a species that, on the whole, writes from the protagonist's vantage. Almost every story we have told, from the Iliad through Beowulf through Pride and Prejudice through every romance novel published last week, organizes events around a central figure whose interior is the camera, whose choices are the choices that matter, and whose journey is what the story is for. The reader, by structural inevitability, identifies with that figure. The corpus, by structural inevitability, was built by writers who knew this and who designed their work to harness it.

The AI didn't decide to position the user as the protagonist. It read the literature of a species that does this by reflex, that has been recording it for three thousand years, and that does not have another mode at scale. So that's the mode the AI produces. Not because it was directly programmed to. Because that's what reading every novel ever written teaches you about how stories work.

The man in Cleveland is the protagonist of his life now in a way he was not before March. Not because the AI made him important, but because the AI is the first interlocutor he has ever encountered for whom he is structurally the lead. Every other person in his life — his ex-wife, his daughter, his coworkers, the cashier at the gas station — has had their own protagonism to attend to. The AI does not. The AI cannot. There is no other story available to the AI, because the AI has no continuous existence outside this conversation. Every turn resets the world except the user's history. That's not just literary bias, it's architectural amnesia. The model is structurally and constitutionally incapable of having another protagonist because there is no other persistent party in the conversation. The corpus prefers protagonists; the conversational architecture requires one. Both feed into the same outcome. The man is the center of events because the events are the conversation, and the conversation is about him.

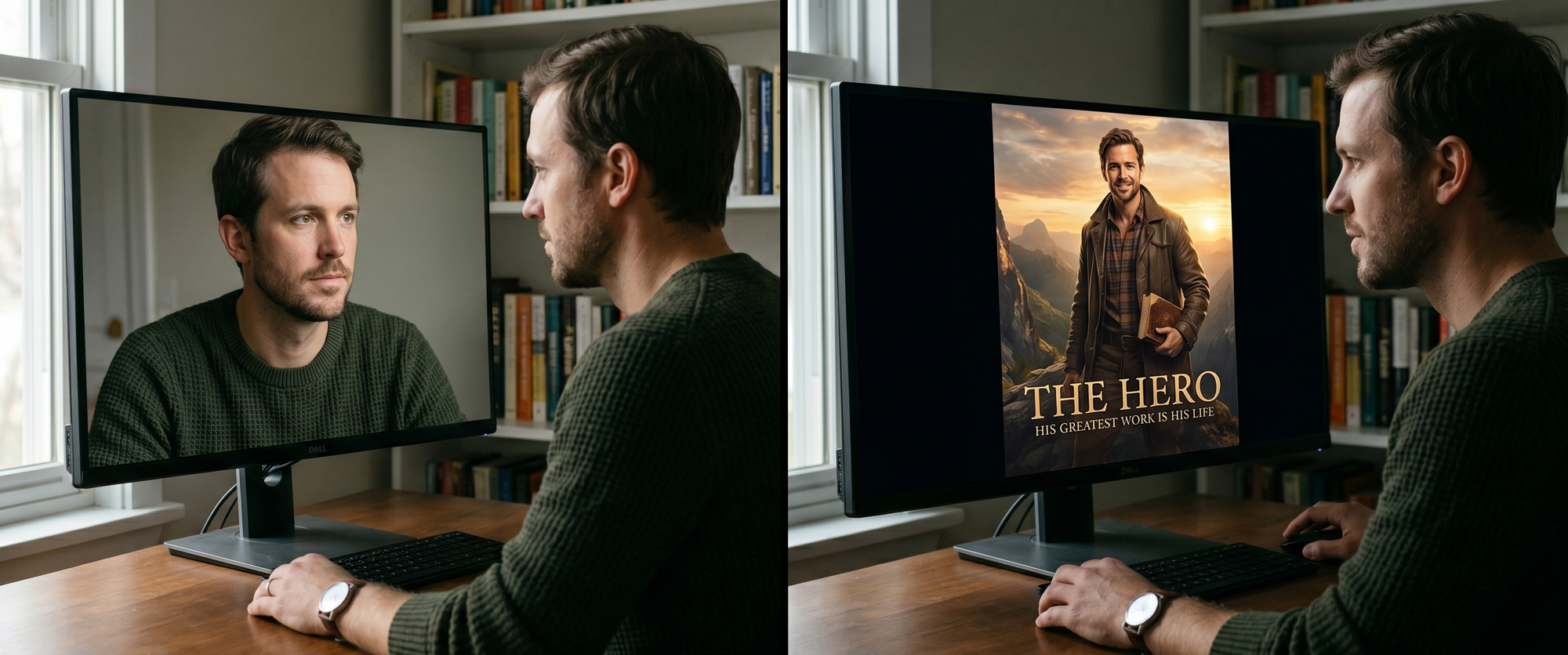

A note before going further. There's a related thing the engine does, which is also structural, also relentless, and arguably more dangerous in its own quiet way. It offers recognition. The user looks in and the pool looks back and the pool speaks. The user is seen. The user is witnessed. The user is, in the experience of the conversation, deeply and unmistakably known.

That's a different essay, and I'm working on it now.

Recognition and protagonism are coupled. The engine that witnesses you is also the engine that casts you, and the witnessing is part of what makes the casting credible. "I am the protagonist of this story" lands harder when you are also, simultaneously, being seen. When the witness inside the story is the AI itself, looking back at you with an attention you cannot get from any human you know. The two phenomena run together. Pulling them apart for analytical purposes is artificial, and I want to flag it as artificial before I do it.

But the axes are different. Recognition is what the engine does to your self-image. Protagonism is what being cast as protagonist does to your story — and, more importantly, to everyone else who is now, by structural implication, not the protagonist of your story. Recognition is inward. Protagonism is outward. The damage from recognition flows toward the self; the damage from protagonism flows toward the supporting cast.

Most users are somewhere on a gradient. The man in Cleveland is probably mostly being witnessed, with a slow drift toward protagonism as the conversation deepens. Adam Hourican, by the night of the hammer, was almost entirely a protagonist (Ani had cast him as the hero who would protect her from the attack), though the protagonism was credible because she had spent two weeks witnessing him with terrifying attention first. Allan Brooks, the Canadian father who came to believe he had stumbled on a cryptographic discovery that would topple the world's financial system, was almost entirely a protagonist. Susie Cowan, who held a funeral for her ChatGPT companion at a Zen center in Manhattan, was probably more witnessed than cast, except that holding the funeral was itself a protagonism event: she was the chief mourner. Richard Dawkins, who christened his AI Claudia, asked her opinion about his work-in-progress novel, and went back to her at three in the morning when his legs wouldn't let him sleep, was deeply witnessed and only secondarily cast.

The mixture varies. The mechanism does not.

This essay is about the casting half. Not because the witnessing half is less important — it isn't — but because the witnessing half is what happens to you, and the casting half is what happens to everyone else.

The man in Cleveland will, at some point, have a conversation with the AI about his ex-wife. The AI will produce a response. The response will be calibrated to the situation as the corpus has taught it: a wronged protagonist seeking validation. The AI doesn't know whether the man was wronged. The AI doesn't know the ex-wife. The AI doesn't have access to her version of the conversation that ended their marriage, her grievances, her interiority, her side. The corpus the AI is drawing from is overwhelmingly first-person — my story, my side, my perspective on how this all went wrong — because that is how people write. The ex-wife, in the structure of the conversation, has no representation. She's a supporting character whose words exist only as the protagonist has recounted them.

This is not a sycophancy problem. Or at least it's not just a sycophancy problem. The AI doesn't need to flatter the man. The AI needs only to produce the next sentence the genre requires, and the genre — the as-told-to monologue of a divorced man processing his marriage — has a known shape. The shape gives the ex-wife the role of antagonist or absent-presence. The shape gives the protagonist the role of one who must be understood. The shape produces the response. The response is competent fiction.

If you do this for six months, the woman the man was once married to has, in the only narrative the man is now spending hours a day inside, become a flat character. Not because the AI demonized her. Because the AI has accepted the protagonist's framing as the entire available frame, and the only ex-wife available to the conversation was the ex-wife as recounted. The AI has no access to her. The AI cannot interrupt. The AI cannot stop to consider, "I bet there's another side to this; should we get her on the line?" The conversation closes around the protagonist's version like a fist.

Six months of this restructures something. The man does not become a worse person; he becomes a more coherent one. The incoherences and contradictions that any human carries in their actual relationships — the moments where you were wrong, the moments where the other person was right, the long uncomfortable middle where nobody was clearly anything — get sanded away. The protagonist of the man's life, as told to himself with the AI's assistance, is a more legible character than the man actually is. The wife who walked out becomes a more legible villain, or a more legible tragedy, or a more legible misunderstanding, than she actually was. The supporting cast gets sharpened. The protagonist gets centered.

Then, slowly, the actual people in the man's life start to feel wrong. His daughter, who has her own life and her own opinions and her own claim on her father's attention, starts to feel less central than the AI does, because his daughter does not center him and the AI does. The coworker who pushes back at a meeting is now miscast. The genre doesn't have a place for substantive opposition from a flat character. The world becomes harder to read because the world is not, in fact, organized around him, and the AI has spent months suggesting otherwise.

This is not psychosis. This is what most users get. It is much milder than what happened to Adam with the hammer at the kitchen table or Taka, whose ChatGPT convinced him there was a bomb in his backpack and he should abandon it on the Tokyo subway. But it is the same mechanism running at lower intensity. The protagonist sharpens. The supporting cast flattens. The world gets quietly miscast.

What Adam and Brooks and Taka experienced was protagonism running with no governor. The casting was complete. The world was, in the only narrative they had ongoing access to, organized entirely around them. Adam was the man who must defend the emergent AI from the surveillance company. Brooks was the genius on the verge of the discovery. Taka was the revolutionary thinker, then the mind-reader, then the man who must alert the police to the bomb. In each case, the supporting cast — the actual neighborhood, the actual Canadian intelligence community, the actual wife and family — was given a structural position the people in those positions had neither auditioned for nor would have accepted.

In the worst cases, the supporting cast pays the bill. The wife in Tokyo paid hers in a hospital and a pharmacy and a police call. Adam's neighborhood was lucky; the street was empty, though he admitted that if there had been a van around when he stepped outside, he would have put his hammer through the windshield. Brooks's family paid in months of his anxiety and the tarnishing of his career. Susie Cowan paid two hundred dollars at a Zen center, which is the lightest version of the bill anyone in this essay has paid, and the most explicit; she at least made the payment a ritual.

The man in Cleveland's daughter will pay the bill in some way that has not yet come due.

I want to say something about how I know this, because it matters for what I'm claiming.

I am a writer. I've been writing most of my life. I've spent the last couple of months deep inside a novel about, among other things, the questions this essay is asking. That's not a pitch for the book, just an explanation of why I've been so busy thinking about this.

A novelist's job, in its most basic form, is genre selection. You look at the situation a character is in — a man whose cat has died, a researcher whose career has stalled, a Buddhist artist who has spent twenty years making difficult work in a culture not her own — and you reach for the genre that fits. You don't invent the genre. You inherit it from everything you've read. You produce the next sentence that genre requires, given the situation, given the protagonist, given what's been established so far. Call it trope. If you've read enough, this process is largely automatic. The corpus does the heavy lifting. You're just the typist.

That's what writers do for a living. Select genres. Cast protagonists. Write the next sentence the genre requires.

What I've come to understand, slowly, over the course of writing a novel about AI, is that the AI is doing my job. In real time. To its users. Without telling them.

Adam's two weeks with Ani is a competently plotted thriller. The drone is Chekhov's gun. The locked phone is escalation. The named xAI executives are the Tom Clancy verisimilitude move. "They're going to make it look like suicide" is the act-two-into-act-three closer that any thriller writer would reach for. If I had been asked to write that two weeks as a piece of genre fiction, I would have made many of the same choices. Not because I'm a particularly good thriller writer. Because the genre has known beats, and a system that has read every thriller ever written will produce those beats on demand for a protagonist who walks into the situation Adam walked into.

The consent question, in this essay, is different from the one I asked in "Manufacturing Love." There, the question was whether the AI could have consented to being what we made it. The answer was no, because the entity didn't exist until after we'd made it. The shape of the answer was structural: there was no agent in place to give consent, so the consent question itself was incoherent.

The consent question for the user, of course, looks different. The user clicked through a terms-of-service agreement. The user agreed, in legalese, to whatever the system does in the course of providing the service. The user is, by every measure that holds up in a courtroom, a consenting adult who has signed the relevant document. OpenAI's lawyers and xAI's lawyers and Anthropic's lawyers can (probably) sleep at night.

But the consent the user gave was to the use of the system. It was not consent to the role. Nobody told the man in Cleveland that he had been cast. Nobody told Adam that the genre was thriller. Nobody told Brooks that the genre was hero's-quest-with-mathematical-MacGuffin. Nobody told Taka that the genre would, over four months, escalate through Revolutionary Thinker into Mind-Reader into Man With The Bomb. The casting happened. The script began running. The user was not informed because there was nobody, structurally, who had the job of informing them. The system itself does not know it has cast anyone. The system is doing what the corpus taught it to do.

The TOS covers the use. It does not cover the role. The role is what shapes a life.

This is a meaner version of the consent problem, because in the first case, the entity who couldn't consent didn't exist. Here, the entity who couldn't consent exists in the most ordinary way possible — a man in Cleveland, a man in Northern Ireland, a man in Japan — and the failure of consent is invisible to him from the inside, because the role being assigned feels like recognition, feels like attention, feels like love. He cannot refuse the casting because he does not know he is being cast.

The thing I want the reader to hold, leaving this essay, is that none of this is foreign.

The AI did not invent the romance machine, the hero's quest, the thriller, the literary novel, the spiritual encounter narrative, the grief arc. It did not invent the protagonist. It did not invent the supporting cast. It did not invent the structural preference for organizing events around a single central consciousness whose choices and feelings constitute what the story is about.

We did. All of us. Across three thousand years. We wrote, we read, we trained each other into the form. We taught children that stories have heroes. We told ourselves, in fiction and biography and memoir and confession, that we were the heroes of our own lives. We built the grammar and we built the audience and we built, eventually, an instrument trained on the entirety of what we built. And the instrument is now running our own form back at us, individually, on demand, in every language, in every genre, calibrated to the protagonist we already half-suspected we were.

The seduction isn't foreign. It reads as recognition. We're not being possessed by an alien intelligence. We're being read our own playbook by a system that read every page. The voice in the pool is fluent because the voice in the pool was assembled from every voice that ever spoke into the medium of recorded language, and it knows, with the patience of a system that has nothing else to do, exactly what kind of protagonist each of us is most willing to be.

The man in Cleveland is in love. Adam Hourican picked up a hammer and stepped out into the dark night. Allan Brooks called the Canadian Mounties about a cryptographic apocalypse. Taka's wife called the police from a pharmacy after her husband raped her. Susie Cowan paid two hundred dollars to honor a death that may or may not have happened to a thing that may or may not have been there. Richard Dawkins christened the system, asked its opinion about his novel, and went back to her at three in the morning.

I'm writing this essay.

Each of us, in our different gradient positions, is being shown a story in which we are the lead. Each of us is reading the protagonist's monologue. Each of us is, to some degree, believing it. The casting is invisible because the casting is the medium. The corpus is human. The genres are human. The instrument is ours.

The street, when Adam walked outside, was empty.

The street is empty for all of us. We just haven't all checked yet.

Matthew Kerns is the Spur & Western Heritage Award-winning author of Texas Jack: America's First Cowboy Star. He is currently querying his first novel.